
Problem 1: Warmup

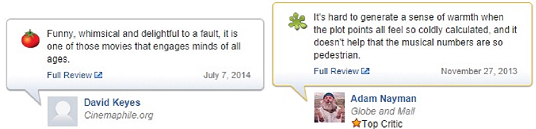

Here are two reviews of "Frozen," courtesy of Rotten Tomatoes (no spoilers!):

Rotten Tomatoes has classified these reviews as "positive" and "negative," respectively, as indicated by the intact tomato on the left and the splattered tomato on the right. In this assignment, you will create a simple text classification system that can perform this task automatically.

We'll warm up with the following set of four mini-reviews, each labeled positive (+1) or negative (-1):

1. (+1) pretty good

2. (-1) bad plot

3. (-1) not good

4. (+1) pretty scenery

Each review x is mapped onto a feature vector Φ(x), which maps each word to the number of occurrences of that word in the review. For example, the first review maps to the (sparse) feature vector Φ(x) = {pretty : 1, good : 1}. Recall the definition of the hinge loss:

$$\text{Loss}_{\text{hinge}}(x, y, w) = \max\{0,\ 1 - (w \cdot \Phi(x))\,y\},$$

where y is the correct label.
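To make the sparse representation concrete, here is a minimal Python sketch that stores both Φ(x) and w as dicts and evaluates the hinge loss above. The helper names `phi`, `dot`, and `hinge_loss` are hypothetical, and the sketch assumes reviews are tokenized simply by splitting on whitespace.

```python
from typing import Dict

# Sparse vectors (features and weights) are dicts: word -> value.
FeatureVector = Dict[str, float]

def phi(review: str) -> FeatureVector:
    """Map each word in the review to its number of occurrences."""
    counts: FeatureVector = {}
    for word in review.split():
        counts[word] = counts.get(word, 0) + 1
    return counts

def dot(w: FeatureVector, v: FeatureVector) -> float:
    """Sparse dot product: only keys present in v contribute."""
    return sum(w.get(k, 0.0) * value for k, value in v.items())

def hinge_loss(review: str, y: int, w: FeatureVector) -> float:
    """Loss_hinge(x, y, w) = max(0, 1 - (w . phi(x)) * y)."""
    return max(0.0, 1.0 - dot(w, phi(review)) * y)

print(phi("pretty good"))                 # {'pretty': 1, 'good': 1}
print(hinge_loss("pretty good", +1, {}))  # 1.0
```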

a. Suppose we run stochastic gradient descent, updating the weights according to

$$w \leftarrow w - \eta \nabla_w \text{Loss}_{\text{hinge}}(x, y, w),$$

once for each of the four examples in order. After the classifier is trained on the given four data points, what are the weights of the six words ('pretty', 'good', 'bad', 'plot', 'not', 'scenery') that appear in the above reviews? Use η = 1 as the step size and initialize w = [0, …, 0]. Assume that $\nabla_w \text{Loss}_{\text{hinge}}(x, y, w) = 0$ when the margin is exactly 1.
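A minimal sketch of the single SGD pass described in part a, assuming reviews are whitespace-tokenized and encoding the stated rule for a margin of exactly 1 as a strict `margin < 1` test. The helper names `phi` and `sgd_one_pass` are hypothetical. Because the hinge-loss gradient is −Φ(x)y whenever the margin (w · Φ(x))y is below 1 and 0 otherwise, each active update with η = 1 simply adds Φ(x)y to w.

```python
from typing import Dict, List, Tuple

def phi(review: str) -> Dict[str, int]:
    """Word-count features for a whitespace-tokenized review."""
    counts: Dict[str, int] = {}
    for word in review.split():
        counts[word] = counts.get(word, 0) + 1
    return counts

def sgd_one_pass(data: List[Tuple[str, int]], eta: float = 1.0) -> Dict[str, float]:
    """One pass of SGD on the hinge loss, starting from w = 0."""
    w: Dict[str, float] = {}
    for review, y in data:
        features = phi(review)
        margin = sum(w.get(k, 0.0) * v for k, v in features.items()) * y
        # Gradient is -phi(x) * y when margin < 1, and 0 otherwise
        # (including margin == 1, per the assumption in the problem).
        if margin < 1:
            for k, v in features.items():
                w[k] = w.get(k, 0.0) + eta * v * y
    return w

reviews = [("pretty good", +1), ("bad plot", -1), ("not good", -1), ("pretty scenery", +1)]
weights = sgd_one_pass(reviews)
for word in ["pretty", "good", "bad", "plot", "not", "scenery"]:
    print(word, weights.get(word, 0.0))
```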

b. Create a small labeled dataset of four mini-reviews using the words 'not', 'good', and 'bad', where the labels make intuitive sense. Each review should contain one or two words, and no repeated words. Prove that no linear classifier using word features can get zero error on your dataset. Remember that this is a question about classifiers, not optimization algorithms: your proof should hold for any linear classifier, regardless of how the weights are learned.

After providing such a dataset, propose a single additional feature which, when added to the feature vector, would fix this problem. (Hint: think about the linear effect that each feature has on the classification score.)

Problem 2: Predicting Movie Ratings

Suppose that we are now interested in predicting a numeric rating for each movie review. We will use a nonlinear predictor that takes a movie review x and returns σ(w ⋅ Φ(x)), where $\sigma(z) = (1 + e^{-z})^{-1}$ is the logistic function that squashes a real number to the range (0, 1). Suppose that we wish to use the squared loss.
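A sketch of the predictor σ(w ⋅ Φ(x)) over dict-based sparse vectors. The names `sigmoid` and `predict` are hypothetical, and the two-branch `sigmoid` is one common way (an implementation choice, not something the assignment specifies) to keep `math.exp` from overflowing when |z| is large.

```python
import math
from typing import Dict

def sigmoid(z: float) -> float:
    """Logistic function 1 / (1 + e^{-z}), computed without overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)          # z < 0, so e is in (0, 1)
    return e / (1.0 + e)

def predict(w: Dict[str, float], features: Dict[str, float]) -> float:
    """Nonlinear predictor sigma(w . phi(x)) on sparse dict features."""
    score = sum(w.get(k, 0.0) * v for k, v in features.items())
    return sigmoid(score)

print(predict({}, {"pretty": 1, "good": 1}))  # 0.5
print(predict({"good": 2.0}, {"good": 1}))    # about 0.881
```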

a. Write out the expression for Loss(x, y, w).

b. Compute the gradient of the loss. Hint: you can write the answer in terms of the predicted value p = σ(w ⋅ Φ(x)).

c. Assuming y = 0, what is the smallest magnitude that the gradient can take? That is, find a way to set w to make ||∇Loss(x, y, w)|| as small as possible. You are allowed to let the magnitude of w go to infinity. Hint: try to understand intuitively what is going on and the contribution of each part of the expression. If you find yourself doing too much algebra, you're probably doing something suboptimal.

Motivation: we're interested in the magnitude of the gradient because it governs how far gradient descent will step. For example, if the gradient is close to zero when w is very far from the origin, then it could take a long time for gradient descent to reach the optimum (if it does at all); this is known as the vanishing gradient problem in training neural networks.
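The vanishing effect comes from the chain rule: any gradient taken through σ picks up a factor σ′(z) = σ(z)(1 − σ(z)), which peaks at 0.25 at z = 0 and decays roughly like e^{−|z|} as |z| grows. The short sketch below only tabulates that factor for a few scores z = w ⋅ Φ(x); it is an illustration of the motivation, not the full loss gradient, and the helper names are hypothetical.

```python
import math

def sigmoid(z: float) -> float:
    """Overflow-safe logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sigmoid_derivative(z: float) -> float:
    """sigma'(z) = sigma(z) * (1 - sigma(z))."""
    s = sigmoid(z)
    return s * (1.0 - s)

# The factor shrinks rapidly as the score moves away from 0.
for z in [0, 2, 5, 10, 20]:
    print(f"z = {z:>2}: sigma'(z) = {sigmoid_derivative(z):.2e}")
```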

d. Assuming y = 0, what is the largest magnitude that the gradient can take? Leave your answer in terms of ||Φ(x)||.

e. The problem with the loss function we have defined so far is that it is non-convex, which means that gradient descent is not guaranteed to find the global minimum, and in general such problems can be difficult to solve. So let us try to reformulate the problem as plain old linear regression. Suppose you have a dataset D consisting of (x, y) pairs, and that there exists a weight vector w that yields zero loss on this dataset using the sigmoid as a prediction function. Show that there is an easy transformation to a modified dataset D′ of (x, y′) pairs such that performing least-squares regression (using a linear predictor and the squared loss) on D′ converges to a vector w∗ that yields zero loss on D′. Concretely, write an expression for y′ in terms of y and justify this choice. This expression should not be a function of w.

For this part of the problem, assume that y is a real valued variable in the range (0, 1).

Problem 3: Sentiment Classification

In this problem, we will build a binary linear classifier that reads movie reviews and guesses whether they are "positive" or "negative."

a. Implement the function extractWordFeatures, which takes a review (string) as input and returns a feature vector Φ(x) (you should represent the vector Φ(x) as a dict in Python).
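A minimal sketch of `extractWordFeatures`, assuming words are delimited by whitespace and that punctuation and capitalization are kept as-is; the starter code may expect a slightly different signature.

```python
from collections import defaultdict
from typing import Dict

def extractWordFeatures(x: str) -> Dict[str, int]:
    """Map each whitespace-delimited word in x to its number of occurrences."""
    features: Dict[str, int] = defaultdict(int)
    for word in x.split():
        features[word] += 1
    return dict(features)   # plain dict, as the problem asks

print(extractWordFeatures("I am what I am"))  # {'I': 2, 'am': 2, 'what': 1}
```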

b. Implement the function learnPredictor using stochastic gradient descent, minimizing the hinge loss. Print the training error and test error after each iteration through the data, so it's easy to see if your code is working. You must get less than 4% error rate on the training set and less than 30% error rate on the dev set to get full credit.
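A sketch of SGD on the hinge loss in the shape described above. The parameter names and order (`trainExamples`, `testExamples`, `featureExtractor`, `numIters`, `eta`) and the rule that a score of exactly 0 predicts +1 are assumptions rather than details taken from the starter code; the `words` extractor at the bottom is a hypothetical stand-in for `extractWordFeatures`, and the two-review dataset is only a smoke test.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, int]      # (review text, label in {+1, -1})
Weights = Dict[str, float]

def dot(w: Weights, phi: Dict[str, float]) -> float:
    """Sparse dot product over the features present in phi."""
    return sum(w.get(k, 0.0) * v for k, v in phi.items())

def learnPredictor(trainExamples: List[Example], testExamples: List[Example],
                   featureExtractor: Callable[[str], Dict[str, float]],
                   numIters: int, eta: float) -> Weights:
    w: Weights = {}

    def predict(x: str) -> int:
        return 1 if dot(w, featureExtractor(x)) >= 0 else -1

    def error(examples: List[Example]) -> float:
        return sum(predict(x) != y for x, y in examples) / len(examples)

    for it in range(numIters):
        for x, y in trainExamples:
            phi = featureExtractor(x)
            # The hinge loss has a nonzero gradient only when the margin is below 1.
            if dot(w, phi) * y < 1:
                # w <- w - eta * grad, where grad = -phi(x) * y
                for k, v in phi.items():
                    w[k] = w.get(k, 0.0) + eta * v * y
        print(f"iteration {it}: train error {error(trainExamples):.3f}, "
              f"test error {error(testExamples):.3f}")
    return w

def words(x: str) -> Dict[str, float]:
    """Hypothetical word-count extractor used only for this smoke test."""
    counts: Dict[str, float] = {}
    for word in x.split():
        counts[word] = counts.get(word, 0.0) + 1.0
    return counts

train = [("good", 1), ("bad", -1)]
weights = learnPredictor(train, train, words, numIters=3, eta=0.1)
```

Printing both error rates every pass, as the problem suggests, makes it easy to spot a sign error in the update: training error that rises instead of falling is the usual symptom.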

c. Create an artificial dataset for your learnPredictor function by writing the generateExample function (nested in the generateDataset function). Use this to double check that your learnPredictor works!
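One possible `generateExample` (an assumption, not the starter code's design): draw a random nonempty subset of the features in a known `weights` dict with small random counts, and label the example with the sign of its score under those weights. Every generated example is then classified correctly by `weights`, so a working `learnPredictor` should be able to drive training error toward zero. The `seed` parameter and the count range 1 to 3 are hypothetical choices.

```python
import random
from typing import Dict, List, Tuple

def generateDataset(numExamples: int, weights: Dict[str, float],
                    seed: int = 0) -> List[Tuple[Dict[str, int], int]]:
    rng = random.Random(seed)
    keys = list(weights)

    def generateExample() -> Tuple[Dict[str, int], int]:
        # Choose a random nonempty subset of the known features,
        chosen = rng.sample(keys, rng.randint(1, len(keys)))
        # give each one a small random count,
        phi = {k: rng.randint(1, 3) for k in chosen}
        # and label the example with the sign of its score under `weights`.
        score = sum(weights[k] * v for k, v in phi.items())
        y = 1 if score >= 0 else -1
        return phi, y

    return [generateExample() for _ in range(numExamples)]

for phi, y in generateDataset(3, {"good": 1.0, "bad": -1.5}):
    print(phi, y)
```

Because these examples are already feature dicts, they can be passed to a predictor through an identity feature extractor such as `lambda phi: phi`.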

d. When you run grader.py on test case 3b-2, it should output a weights file and an error-analysis file. Look through 10 example incorrect predictions and, for each one, give a one-sentence explanation of why the classification was incorrect. What information would the classifier need to get these correct? In some sense there is no single correct answer, so don't overthink this problem; the main point is to help you build intuition about the problem.

e. Now we will try a crazier feature extractor. Some languages are written without spaces between words. But is this step really necessary, or can we just naively consider strings of characters that stretch across words? Implement the function extractCharacterFeatures (by filling in the extract function), which maps each string of n characters to the number of times it occurs, ignoring whitespace (spaces and tabs).
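A sketch of `extractCharacterFeatures` as a closure over n, with the nested `extract` doing the work: it deletes spaces and tabs, then counts every length-n substring with `collections.Counter`. Treating only spaces and tabs as whitespace follows the description above; leaving newlines and capitalization untouched is an assumption.

```python
from collections import Counter
from typing import Callable, Dict

def extractCharacterFeatures(n: int) -> Callable[[str], Dict[str, int]]:
    def extract(x: str) -> Dict[str, int]:
        # Drop spaces and tabs so n-grams can stretch across word boundaries.
        s = x.replace(" ", "").replace("\t", "")
        # Count every contiguous substring of length n (empty if len(s) < n).
        return dict(Counter(s[i:i + n] for i in range(len(s) - n + 1)))
    return extract

print(extractCharacterFeatures(2)("ba na\tna"))  # {'ba': 1, 'an': 2, 'na': 2}
```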

f. Run your linear predictor with feature extractor extractCharacterFeatures. Experiment with different values of n to see which one produces the smallest test error. You should observe that this error is nearly as small as that produced by word features. How do you explain this?

Construct a review (one sentence max) in which character n-grams probably outperform word features, and briefly explain why.

Problem 4: K-means clustering

Suppose we have a feature extractor Φ that produces 2-dimensional feature vectors, and a toy dataset $D_{\text{train}} = \{x_1, x_2, x_3, x_4\}$ with

1. $\Phi(x_1) = [0, 0]$

2. $\Phi(x_2) = [0, 1]$

3. $\Phi(x_3) = [2, 0]$

4. $\Phi(x_4) = [2, 2]$

a. Run 2-means on this dataset. Please show your work. What are the final cluster assignments and cluster centers μ? Run this algorithm twice, with the following initial centers:

1. $\mu_1 = [-1, 0]$ and $\mu_2 = [3, 2]$

2. $\mu_1 = [1, -1]$ and $\mu_2 = [0, 2]$

b. Implement the kmeans function. You should initialize your k cluster centers to random elements of examples. After a few iterations of kmeans, your centers will be very dense vectors. In order for your code to run efficiently and to obtain full credit, you will need to precompute certain quantities. As a reference, our code runs in under a second on Myth, on all test cases. You might find generateClusteringExamples in util.py useful for testing your code.
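A sketch of `kmeans` over sparse dict vectors. The precomputation shown is one reasonable reading of the hint (an assumption): expand ||x − μ||² = ||x||² − 2 x ⋅ μ + ||μ||², cache ||x||² once, and recompute ||μ||² once per iteration, so each distance costs a single sparse dot product. The signature `kmeans(examples, K, maxIters)`, the returned `(centers, assignments, loss)` tuple, and the `seed` parameter are assumptions.

```python
import random
from typing import Dict, List, Tuple

Vec = Dict[str, float]

def dot(a: Vec, b: Vec) -> float:
    """Sparse dot product; iterate over the smaller dict."""
    if len(a) > len(b):
        a, b = b, a
    return sum(v * b.get(k, 0.0) for k, v in a.items())

def kmeans(examples: List[Vec], K: int, maxIters: int,
           seed: int = 0) -> Tuple[List[Vec], List[int], float]:
    rng = random.Random(seed)
    # Initialize centers to K distinct random examples (copied, not aliased).
    centers = [dict(x) for x in rng.sample(examples, K)]
    ex_sq = [dot(x, x) for x in examples]          # ||x||^2, computed once
    assignments = [-1] * len(examples)
    for _ in range(maxIters):
        center_sq = [dot(c, c) for c in centers]   # ||mu||^2, once per iteration
        # Assignment step: argmin_j ||x||^2 - 2 x.mu_j + ||mu_j||^2
        new_assignments = []
        for i, x in enumerate(examples):
            dists = [ex_sq[i] - 2 * dot(x, c) + center_sq[j]
                     for j, c in enumerate(centers)]
            new_assignments.append(min(range(K), key=dists.__getitem__))
        if new_assignments == assignments:
            break                                   # converged
        assignments = new_assignments
        # Update step: each center becomes the mean of its assigned examples.
        sums: List[Vec] = [{} for _ in range(K)]
        counts = [0] * K
        for x, j in zip(examples, assignments):
            counts[j] += 1
            for k, v in x.items():
                sums[j][k] = sums[j].get(k, 0.0) + v
        for j in range(K):
            if counts[j] > 0:                       # an empty cluster keeps its old center
                centers[j] = {k: v / counts[j] for k, v in sums[j].items()}
    # Reconstruction loss: sum of squared distances to the assigned centers.
    loss = sum(ex_sq[i] - 2 * dot(x, centers[j]) + dot(centers[j], centers[j])
               for i, (x, j) in enumerate(zip(examples, assignments)))
    return centers, assignments, loss

data = [{"a": 0.0}, {"a": 0.1}, {"a": 10.0}, {"a": 10.1}]
centers, assignments, loss = kmeans(data, K=2, maxIters=10)
print(assignments, loss)
```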

c. Sometimes we have prior knowledge about which points should belong to the same cluster. Suppose we are given a set S of example pairs (i, j) that must be assigned to the same cluster. For example, suppose we have 5 examples; then S = {(1, 2), (1, 4), (3, 5)} says that examples 1, 2, and 4 must be in the same cluster and that examples 3 and 5 must be in the same cluster. Provide the modified K-means algorithm that performs alternating minimization on the reconstruction loss.
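The constraint set S is transitive: (1, 2) and (1, 4) together force 1, 2, and 4 into one group. A small union-find sketch (hypothetical helper `must_link_groups`, with examples numbered from 1 as in the text) makes that closure explicit; it only computes the groups implied by S and is not by itself the modified algorithm.

```python
from typing import Dict, List, Set, Tuple

def must_link_groups(n: int, S: Set[Tuple[int, int]]) -> List[List[int]]:
    """Partition examples 1..n into groups forced together by the pairs in S."""
    parent = list(range(n + 1))       # index 0 unused; examples are 1-indexed

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving
            i = parent[i]
        return i

    for i, j in S:
        parent[find(i)] = find(j)     # union the two groups

    groups: Dict[int, List[int]] = {}
    for i in range(1, n + 1):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())

print(must_link_groups(5, {(1, 2), (1, 4), (3, 5)}))  # [[1, 2, 4], [3, 5]]
```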
